DAVINSY DATA-DRIVEN AIoT CONTROL SYSTEM

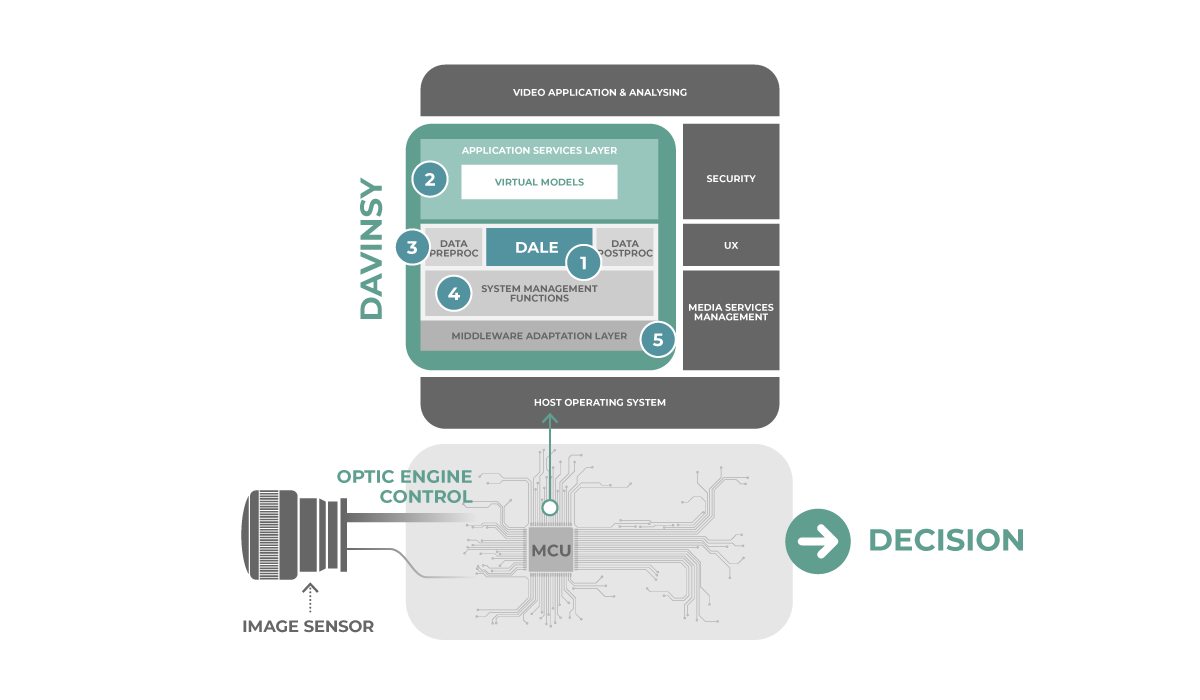

Bondzai’s DavinSy is an embedded software system. DavinSy accelerates the integration of complete deep learning AI workflows in industrial embedded systems.

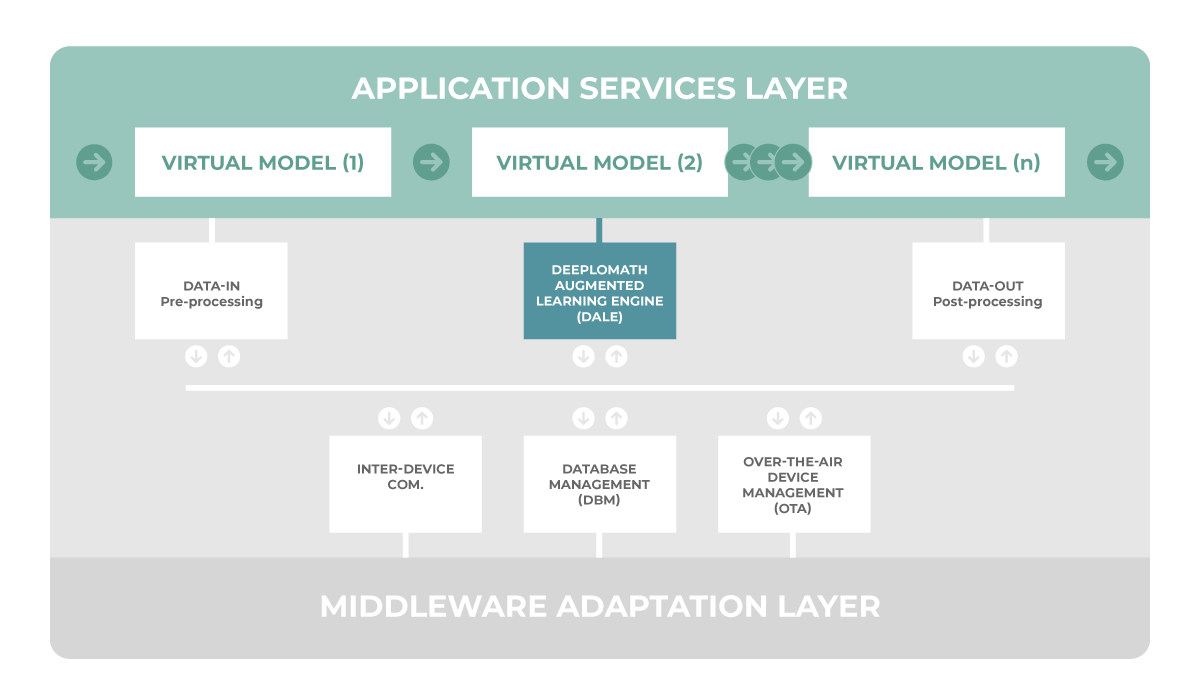

DavinSy continuously learns from live data to solve the toughest problem with today’s static AI models: data drift over time. To achieve this, DavinSy adapts its models just in time to match the variability of live data. DavinSy introduces Virtual Models, a new programming method exposed in the Application Services Layer (ASL). Virtual Models drive DALE (Deeplomath Augmented Learning Engine), DavinSy’s internal deep AI engine. DavinSy achieves polymorphism through locally built models.

If the data structure changes and the models must be adapted to a new situation, they are rebuilt automatically by learning directly from the structure extracted by DavinSy's data pre-processing functions.

The system is cross-platform software that interfaces with most embedded environments. DavinSy is built around a few major functional blocks and an innovative AI engine called DALE.

The main components of DavinSy illustrated above are:

Deeplomath Augmented Learning Engine (DALE)

The engine is proprietary and does not require any third-party library. It offers a simple API with only a few parameters to configure. The dataset is built on the fly from live data during operation. DALE is fully generic: it is independent of the type of problem to solve (regression or classification) and of the application domain. The algorithm is certifiable and deterministic, with no stochastic component. For each dataset, it constructs the associated deep neural network in a single pass, without any hyper-parameter to tune. The memory footprint of the code is less than 16 KB.

DALE parameters are limited to the type of problem to solve, the accuracy vs. memory trade-off, and the rejection vs. acceptance threshold in open-set problems.
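As an illustration only, the three parameters listed above could be grouped as follows. This is a minimal sketch, not DALE's actual API: the names `DaleConfig`, `ProblemType`, `accuracy_vs_memory`, `rejection_threshold` and `accept`, as well as the 0 to 1 value ranges, are assumptions made for this example.

```python
from dataclasses import dataclass
from enum import Enum


class ProblemType(Enum):
    CLASSIFICATION = "classification"
    REGRESSION = "regression"


@dataclass(frozen=True)
class DaleConfig:
    """Hypothetical container for the three parameters named in the text."""
    problem_type: ProblemType
    accuracy_vs_memory: float   # assumed scale: 0.0 = smallest memory, 1.0 = highest accuracy
    rejection_threshold: float  # assumed scale: 0.0 to 1.0, used in open-set problems

    def __post_init__(self):
        # Validate the assumed ranges early so a bad configuration fails fast.
        if not 0.0 <= self.accuracy_vs_memory <= 1.0:
            raise ValueError("accuracy_vs_memory must be in [0, 1]")
        if not 0.0 <= self.rejection_threshold <= 1.0:
            raise ValueError("rejection_threshold must be in [0, 1]")


def accept(config: DaleConfig, confidence: float) -> bool:
    """Open-set decision: accept only if confidence reaches the threshold."""
    return confidence >= config.rejection_threshold
```

With a threshold of 0.8, an inference with confidence 0.9 would be accepted and one with confidence 0.5 would be rejected as unknown.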

Application Services Layer (ASL)

ASL offers a high-level software interface to describe how problems are resolved and in which conditional sequence. Complex problems are split into simpler ones. For example, with static AI models, multi-label problems (e.g. identifying both the speaker and the intent) require large networks that are difficult to tune. Splitting the problem across two networks is more efficient in terms of resource allocation, performance and explainability. Each of these problems is described by its preprocessing and its DALE configuration parameters. The Virtual Model is a new concept in the DavinSy system that overrides the behaviour of the DALE engine to achieve polymorphism in regenerated models. Thanks to DALE's genericity, ASL can operate in multi-modal mode in the target application by chaining multiple Virtual Models.
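The splitting and chaining described above can be sketched in a few lines. This is a hedged illustration and not the ASL interface: `VirtualModel`, `run_chain`, the `"rejected"` label and the toy preprocessing and inference functions are all hypothetical, and the lambdas stand in for networks that DALE would build.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence

Features = List[float]


@dataclass
class VirtualModel:
    """Hypothetical pairing of a preprocessing step with one inference step."""
    name: str
    preprocess: Callable[[Sequence[float]], Features]
    infer: Callable[[Features], str]  # stand-in for a DALE-built network

    def run(self, raw: Sequence[float]) -> str:
        return self.infer(self.preprocess(raw))


def run_chain(models: Sequence[VirtualModel], raw: Sequence[float]) -> Dict[str, str]:
    """Run models in order; stop as soon as one of them rejects the input."""
    results: Dict[str, str] = {}
    for model in models:
        results[model.name] = model.run(raw)
        if results[model.name] == "rejected":
            break  # conditional sequence: later models are skipped
    return results


# Two small problems instead of one large multi-label network (toy logic).
speaker = VirtualModel(
    name="speaker",
    preprocess=lambda raw: [sum(raw) / len(raw)],
    infer=lambda f: "alice" if f[0] > 0.5 else "rejected",
)
intent = VirtualModel(
    name="intent",
    preprocess=lambda raw: [max(raw)],
    infer=lambda f: "lights_on" if f[0] > 0.8 else "lights_off",
)

print(run_chain([speaker, intent], [0.6, 0.9, 0.7]))
```

In this sketch the intent model only runs when the speaker model accepts the input, which is one simple way to express a conditional sequence.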

We provide a large open library of preprocessing algorithms, including classical audio, image and inertial DSP transforms (MFCC, voice pitch, spectra, image reshaping, …) and the upper layers of existing open-source neural networks used as feature extractors (ResNet-like, voice x-vectors, …). The library also contains a set of data-augmentation functions.

The Post-Processing library is a set of application rules whose purpose is to transform the individual inference results into a final decision.
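One possible rule of this kind is a majority vote over recent inference results. The sketch below is an assumed example, not a rule taken from the DavinSy library; the function name `majority_decision` and the `min_share` parameter are hypothetical.

```python
from collections import Counter
from typing import Optional, Sequence


def majority_decision(labels: Sequence[str], min_share: float = 0.6) -> Optional[str]:
    """Return the most frequent label if it reaches min_share of the votes.

    Returns None when there are no results or no label is dominant enough,
    so the caller can treat the outcome as "no decision".
    """
    if not labels:
        return None
    label, count = Counter(labels).most_common(1)[0]
    return label if count / len(labels) >= min_share else None


print(majority_decision(["on", "on", "off", "on", "on"]))  # prints: on
```

Requiring a minimum share of the votes keeps a single noisy inference from flipping the final decision.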

System Management Functions

DavinSy handles remote device management (OTA) for monitoring and modular firmware updates. It supports communication between several instances of DavinSy, whether they run on different CPUs or on different devices on the same network. It includes the Database Management Module (DBM), which handles storage of the dataset, Virtual Models and networks; checkpoint creation; import and export of data for collaboration and rollback; monitoring; and a cleaning policy based on data freshness and quality, DALE indicators and memory capacity.
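To make the idea of a cleaning policy concrete, here is a minimal sketch that keeps only fresh, good-quality records within a fixed capacity. It is an assumption-based illustration, not the DBM implementation: `Record`, `select_records_to_keep` and their fields and thresholds are hypothetical, and DALE indicators are left out.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Record:
    record_id: int
    age_s: float    # time since capture, in seconds (freshness)
    quality: float  # assumed quality score between 0.0 and 1.0


def select_records_to_keep(
    records: Sequence[Record],
    capacity: int,
    max_age_s: float,
    min_quality: float,
) -> List[Record]:
    """Drop stale or low-quality records, then keep the best ones that fit."""
    eligible = [
        r for r in records
        if r.age_s <= max_age_s and r.quality >= min_quality
    ]
    # Prefer higher quality; break ties in favour of fresher data.
    eligible.sort(key=lambda r: (-r.quality, r.age_s))
    return eligible[:capacity]


records = [
    Record(1, age_s=10, quality=0.9),
    Record(2, age_s=5000, quality=0.95),  # too old
    Record(3, age_s=20, quality=0.4),     # quality too low
    Record(4, age_s=30, quality=0.8),
    Record(5, age_s=5, quality=0.7),
]
kept = select_records_to_keep(records, capacity=2, max_age_s=3600, min_quality=0.5)
print([r.record_id for r in kept])  # prints: [1, 4]
```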